The Future

Today is my last day at Luminoso, and I won’t be able to update this copy of the site anymore. But ConceptNet will continue.

For future posts and updates, please head over to concepts.arborelia.net.

ConceptNet 5.8 has been released!

In this release, we’re focused on improving the maintainability of ConceptNet, with a few small but significant changes to the data. Here’s an overview of what’s changed.

You can now reach ConceptNet’s web site and API over HTTPS. There’s nothing about ConceptNet that particularly requires encryption, but the security of the Web as a whole would be better if every site could be reached through HTTPS, and we’re happy to go along with that.

One immediate benefit is that an HTTPS web page can safely make requests to ConceptNet’s API.
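As a minimal sketch of what a request over HTTPS looks like from Python: the host `api.conceptnet.io` and the `/c/<language>/<term>` path follow the public API, while the helper names `concept_url` and `fetch_edges` are made up for this example.

```python
import json
from urllib.parse import quote
from urllib.request import urlopen

API_ROOT = "https://api.conceptnet.io"  # public API host; assumed reachable


def concept_url(language, term):
    """Build the HTTPS URL for a term, e.g. ('en', 'example')."""
    # Spaces in terms are written as underscores in ConceptNet URIs.
    path = "/c/{}/{}".format(language, term.lower().replace(" ", "_"))
    return API_ROOT + quote(path)


def fetch_edges(language, term):
    """Fetch the list of edges for a term (needs network access)."""
    with urlopen(concept_url(language, term)) as response:
        return json.load(response)["edges"]
```

Calling `fetch_edges("en", "example")` should return the edges for that term, assuming the API is up and the response still carries an `edges` key.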

We now have ConceptNet set up with continuous integration using Jenkins and deployment to AWS using Terraform. This should make new versions and fixes much easier to deploy, without a long list of things that must be done manually.

This should also allow us, soon, to update the instructions on how to run one’s own copy of the ConceptNet API without so much manual effort.

Indonesian (id) and Malay (ms)

The use of the language code ms in earlier releases of ConceptNet 5 reflects our uncertainty about the scope of the ms language code. Some sources said it was a “macrolanguage” code for all Malay languages including Indonesian, so we implemented it similarly to the macrolanguage zh for Chinese languages. Thus, we formerly used ms to include both Indonesian (bahasa Indonesia) and the specific Malay language (bahasa Melayu).

This led to confusion of words that have different meanings or connotations in the two languages, and the appearance that Indonesian was missing from the language list. In retrospect, it’s better and more expected to represent the Indonesian language with its own language code, id.

So in version 5.8, we have separate support for Indonesian (id) and Malay (ms). This is the largest data change in ConceptNet 5.8. Fortunately, our largest sources of data for these languages (Open Multilingual WordNet and Wiktionary) have similar coverage of both languages.

Building ConceptNet involves a step that extracts knowledge from Wiktionary using our custom MediaWiki parser, wikiparsec. Wiktionary is a crowd-sourced dictionary that is developed separately in many languages (that is, the languages that the definitions are written in). Each of these language editions of Wiktionary also defines words in hundreds of languages.

The practices around formatting Wiktionary entries change from time to time, so a parser for the English Wiktionary of 2019 won’t necessarily parse the English Wiktionary of 2020.

In fact, it doesn’t: this is a problem we’ve actually run into. We can’t update the English Wiktionary entries until we account for some entirely new formatting that arose in the last year. But we can update the other Wiktionaries we parse, French and German, from the 2016 version to the 2020 version. That’s what we’ve done in this update, acquiring four years of fixes, new details, and new words.

There’s some crowdsourced data that shouldn’t appear in ConceptNet, and in 5.8 we’re doing more to filter it.

Previously, we’ve used some heuristics to filter bad answers that came from the game Verbosity, and a few particularly unhelpful contributors and topic areas in Open Mind Common Sense.

Recently, we and others have noticed some offensive word associations in ConceptNet that came from Wiktionary. The issue is that they came from definitions that are appropriate to find in a dictionary, but not elsewhere. A semantic network isn’t a dictionary, and one important difference is that the edges in ConceptNet appear with no context.

A dictionary can say “X is an offensive term that means Y, and here’s where it came from”. It could even have usage notes on why not to say it. That’s all part of a dictionary’s job, defining words no matter what they mean, so you can find out what they mean if you don’t know.

In ConceptNet, such an entry ends up as an edge between X and Y, which is the same as an edge between Y and X. So, unfortunately, looking up an ordinary word in ConceptNet could produce a list of hateful synonyms, and these word associations would also be learned by semantic models such as ConceptNet Numberbatch.

These links aren’t worth including in ConceptNet. Fortunately, in many cases, we can use the structure of Wiktionary to help tell us which edges to not include.

An update to our Wiktionary parser detects definitions that are labeled on Wiktionary as “offensive” or “slur” or similar labels, and produces metadata that the build process can use to exclude that definition. With an expansion of the “blocklist”, the file that we use to manually exclude edges, we can also cover cases that aren’t consistently labeled in Wiktionary.

The filtering doesn’t just have to be about offensive terms: we also use the same mechanism to filter definitions that Wiktionary calls archaic and obsolete, definitions that would not help understand the modern usage of a word, without affecting other senses of the word.
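Here is a rough sketch of how label-based filtering and a manual blocklist could fit together. The edge dictionaries, the label set, and the `keep_edge` function are all hypothetical; the real build process has its own data formats.

```python
# Labels that mark a definition for exclusion (an illustrative set,
# not the list the real build uses).
EXCLUDED_LABELS = {"offensive", "slur", "archaic", "obsolete"}


def keep_edge(edge, blocklist):
    """
    Decide whether a parsed definition should become a ConceptNet edge.

    `edge` is a hypothetical dict with 'start', 'end', and 'labels' keys;
    `blocklist` is a set of (start, end) pairs excluded by hand.
    """
    if EXCLUDED_LABELS & set(edge.get("labels", ())):
        return False
    pair = (edge["start"], edge["end"])
    # Edges are treated as symmetric here, so check both directions.
    return pair not in blocklist and pair[::-1] not in blocklist


edges = [
    {"start": "/c/en/thou", "end": "/c/en/you", "labels": ["archaic"]},
    {"start": "/c/en/cat", "end": "/c/en/feline", "labels": []},
]
kept = [edge for edge in edges if keep_edge(edge, blocklist=set())]
```

Because the check runs per definition, dropping one labeled sense leaves the other senses of the same word alone.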

While I looked through a lot of unfortunate words to check that the filtering had done the right thing, I know I didn’t look at everything, and also I can only really do this in English. If you see ConceptNet edges that should be filtered out, feel free to let me know in an e-mail. With continuous integration, I should even be able to fix it in a timely manner.

This filtering caused no significant change in our semantic benchmarks. As they say, “nothing of value was lost”.

It was during the life of ConceptNet 5.7 that I learned that people actually do use ExternalURL edges to connect ConceptNet to other Linked Open Data resources. And one user pointed out to me how the presentation of them was… neglected.

If you’re browsing ConceptNet’s web interface, the external links used to appear as one of the relation types, which led to them being crammed into a format that didn’t really work for them, and also put them in an arbitrary place on the page depending on how many of them there were. Now, the external links appear in a differently-formatted section at the bottom.

We also now filter the ExternalURLs to only include terms that exist in ConceptNet, instead of having isolated terms that only appear in an ExternalURL.
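In sketch form, that filter is a membership test against the set of known terms. The dictionary shape and the function name below are assumptions made for illustration.

```python
def filter_external_urls(external_edges, known_terms):
    """Keep only ExternalURL edges whose start term exists in ConceptNet."""
    return [edge for edge in external_edges if edge["start"] in known_terms]


# Made-up example data.
known_terms = {"/c/en/cat"}
external_edges = [
    {"start": "/c/en/cat", "end": "http://dbpedia.org/resource/Cat"},
    {"start": "/c/en/isolated_term", "end": "http://example.org/isolated"},
]
linked = filter_external_urls(external_edges, known_terms)
```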

It’s been a while since we made a release of ConceptNet Numberbatch. Here, have some new word embeddings.

ConceptNet Numberbatch is our whimsical double-dactyl name for pre-computed word embeddings built using ConceptNet and distributional semantics. The things we’ve been doing with it at Luminoso have benefited from some improvements we’ve made in the last two years.

The last release we announced was in 2017. Since then, we made a few improvements for SemEval 2018, when we demonstrated how to distinguish attributes using ConceptNet.

But meanwhile, outside of Luminoso, we’ve also seen some great things being built with what we released:

Alex Lew’s Robot Mind Meld uses ConceptNet Numberbatch to play a cooperative improv game.

Tsun-Hsien Tang and others showed how Numberbatch can be combined with image recognition to better retrieve images of daily life.

Sophie Siebert and Frieder Stolzenburg developed CoRg, a reasoning / story understanding system that combines Numberbatch word embeddings with theorem proving, a combination I wouldn’t have expected to see at all.

I hope we can see more projects like this by releasing our improvements to Numberbatch.

We added a step to the build process of ConceptNet Numberbatch called “propagation”. This makes it easier to use Numberbatch to represent a larger vocabulary of words, especially in languages with more inflections than English.

Previously, there were a lot of terms that we didn’t have vectors for, especially word forms that aren’t the most commonly-observed form. We had to rely on the out-of-vocabulary (OOV) strategy to handle these words, by looking up their neighboring terms in ConceptNet that did have vectors. This strategy was hard to implement, because it required having access to the ConceptNet graph at all times.

I know that many projects that attempted to use Numberbatch simply skipped the OOV strategy, so any word that wasn’t directly in the vocabulary just couldn’t be represented, and this led to suboptimal results.

With the “propagation” step, we pre-compute the vectors for more words, especially forms of known words.

This increases the vocabulary size and the space required to use Numberbatch, but leaves us with a simple, fast OOV strategy that doesn’t need to refer to the whole ConceptNet graph. And it should improve the results greatly for users of Numberbatch who aren’t using an OOV strategy at all.
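The details of the propagation step aren't spelled out here, so the following is only a guess at the general idea: give each term that lacks a vector the average of its neighbors' vectors, computed once ahead of time. The `vectors` and `neighbors` structures and the `propagate` function are hypothetical.

```python
def propagate(vectors, neighbors):
    """
    Return a copy of `vectors` extended with averaged vectors.

    `vectors` maps term -> list of floats; `neighbors` maps term -> list of
    adjacent terms in the graph. Both structures are hypothetical.
    """
    extended = dict(vectors)
    for term, adjacent in neighbors.items():
        if term in vectors:
            continue
        known = [vectors[other] for other in adjacent if other in vectors]
        if known:
            # Average the neighbor vectors component by component.
            extended[term] = [sum(column) / len(known) for column in zip(*known)]
    return extended


# Made-up 2-D vectors for two Spanish verbs and two of their forms.
vectors = {"/c/es/hablar": [1.0, 0.0], "/c/es/decir": [0.0, 1.0]}
neighbors = {
    "/c/es/hablando": ["/c/es/hablar"],
    "/c/es/dicho": ["/c/es/decir", "/c/es/hablar"],
}
extended = propagate(vectors, neighbors)
```

The point of doing this at build time is that a user of the finished embeddings only needs a table lookup, not the graph.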

Word embeddings are a useful tool for a lot of NLP tasks, but by now we’ve seen lots of evidence of a risk they carry: when they capture word meanings from the ways we use words, they also capture harmful biases and stereotypes. A clear, up-to-date paper on this is “What are the biases in my word embedding?”, by Nathaniel Swinger et al.

It’s important to do what we can to mitigate that. Machine learning involves lots of ethical issues, and we can’t solve them all while not knowing how you’re even going to use our word embeddings, but we can at least try not to publish something that makes the ethical problems worse. So one of the steps in building ConceptNet Numberbatch is algorithmic de-biasing that tries to identify and mitigate these biases.

(If you want to point out that algorithmic de-biasing is insufficient to solve the problem, you are very right, but that doesn’t mean we shouldn’t do it.)

Chris Sweeney and Maryam Najafian published a new framework for assessing fairness in word embeddings. Their framework doesn’t assume that biases are necessarily binary (men vs. women, white vs. black) or can be seen in a linear projection of the embeddings, as previous metrics did. This assessment comes out looking pretty good for Numberbatch, which associates nationalities and religions with sentiment words more equitably than other embeddings.

Please note that you can’t be assured that your AI project is ethical just because it has one fairer-than-usual component in it. We have not solved AI ethics. You still need to test for harmful effects in the outputs of your actual system, as well as making sure that its inputs are collected ethically.

You can find download links and documentation for the new version on the conceptnet-numberbatch GitHub page.

The Python code that builds the embeddings is in conceptnet5.vectors, part of the conceptnet5 repository.

ConceptNet 5.7 has been released! Here’s a tour of some of the things that are new.

The work of Naoki Otani, Hirokazu Kiyomaru, Daisuke Kawahara, and Sadao Kurohashi has expanded and improved ConceptNet’s crowdsourced knowledge in Japanese.

This group, from Carnegie Mellon and Kyoto University with support from Yahoo! Japan, combined translation and crowdsourcing to collect 18,747 new facts in Japanese, covering common-sense relations that are hard to collect data for, such as /r/AtLocation and /r/UsedFor.

You can read the details in their COLING paper, “Cross-lingual Knowledge Projection Using Machine Translation and Target-side Knowledge Base Completion”.

Sometimes multiple different meanings happen to be represented by words that are spelled the same — that is, different senses of a word. ConceptNet has vaguely supported word senses for a while, and now we’re making more of an effort to actually support them.

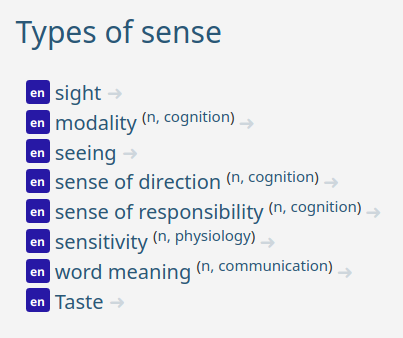

In earlier versions, we only distinguished word senses by their part of speech: for example, the noun “sense” might be at the ConceptNet URI /c/en/sense/n. Now we can provide more details about word senses when we have them, such as /c/en/sense/n/wn/communication, representing a noun sense of “sense” that’s in the WordNet topic area of “communication”.

Of course, a lot of ConceptNet’s data comes from natural language text, where distinguishing word senses is a well-known difficult problem. A lot of the data is still made of ambiguous words. But when we take input from sources that do have distinguishable word senses, we include information about the word senses in the concept URI and in its display on the browseable ConceptNet site.

Specific word senses always appear in a namespace, letting us know who’s defining that word sense, such as WordNet, Wiktionary, or Wikipedia.
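To make the URI layout concrete, here is a small sketch that splits a concept URI into its parts. It assumes the layout `/c/<language>/<term>[/<pos>[/<namespace>/<sense>]]` implied by the examples above; `split_sense_uri` is a made-up helper, not part of the ConceptNet codebase.

```python
def split_sense_uri(uri):
    """
    Split a concept URI into its labeled parts.

    Assumes the layout /c/<language>/<term>[/<pos>[/<namespace>/<sense>...]].
    """
    pieces = uri.strip("/").split("/")
    if len(pieces) < 3 or pieces[0] != "c":
        raise ValueError("not a concept URI: {!r}".format(uri))
    return {
        "language": pieces[1],
        "term": pieces[2],
        "pos": pieces[3] if len(pieces) > 3 else None,
        "namespace": pieces[4] if len(pieces) > 4 else None,
        "sense": "/".join(pieces[5:]) or None,
    }


parts = split_sense_uri("/c/en/sense/n/wn/communication")
```

For that URI, `parts["namespace"]` is `"wn"` and `parts["sense"]` is `"communication"`; for `/c/en/sense/n`, both are `None`.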

Why the different namespaces? Why not have one vocabulary of word senses? Well, I’ve never seen a widely-accepted vocabulary of word senses. Every data source and every word-sense-disambiguation project has its own word senses, and there’s not even agreement on what it takes to say that two word senses are different.

Some word senses in different namespaces probably ought to be the same sense. But with different sources distinguishing word senses in different ways, and no clear way to align them, the best we can do is to distinguish what we can from each data source, and accept that some of them should be overlapping.

ConceptNet 5.7 is powered by PostgreSQL 10, and between the browseable site and the API, it is queried over 250,000 times per day.

Previously, we had to write carefully-tuned SQL queries so that the server could quickly respond to all the kinds of intersecting queries that the ConceptNet API allows, such as all HasA edges between two nodes in Japanese. Some combinations were previously not supported at all, such as all statements collected by Open Mind Common Sense about the Spanish word hablar.

Some of the queries were hard to tune. There was some downtime on ConceptNet 5.6 as users started making more difficult queries and the database failed to keep up. The corners we cut to make the queries efficient enough showed up as strange artifacts, such as queries where most of the results would start with “a” or “b”.

With our new database structure, we no longer have to anticipate and tune every kind of query. ConceptNet queries are now converted into JSONB queries, which PostgreSQL 10 knows how to optimize with a new kind of index. This gives us an efficient way to do every kind of ConceptNet query, using the work of people who are much better than us at optimizing databases.
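As a hedged illustration of the idea, a query built on JSONB containment might look like the sketch below. The table name `edges` and its JSONB column `data` are hypothetical, not ConceptNet's actual schema; `@>` is PostgreSQL's containment operator, which a GIN index on a JSONB column can serve.

```python
import json


def build_edge_query(**criteria):
    """
    Build a parameterized SQL query that matches edges by JSONB containment.

    The table name `edges` and its JSONB column `data` are hypothetical.
    `%s` is the placeholder style used by drivers such as psycopg2.
    """
    if not criteria:
        raise ValueError("at least one criterion is required")
    sql = "SELECT data FROM edges WHERE data @> %s::jsonb LIMIT 50"
    return sql, (json.dumps(criteria, sort_keys=True),)


sql, params = build_edge_query(rel="/r/HasA", language="ja")
```

Any combination of criteria produces the same query shape, which is why there is no longer a need to hand-tune one query per combination.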

Those are the major updates we’ve made to ConceptNet, so I’d like to wrap up by discussing why it’s important that we continue to maintain and improve ConceptNet. It continues to play a valuable role in understanding what people mean by the words they use.

In this era where it seems that machine learning can learn anything from enough data, it turns out that computers still do gain more common-sense understanding from ConceptNet. In the story understanding task at SemEval 2018, the best and fourth-best systems both took input from ConceptNet. We previously wrote about how our ConceptNet-based system was the second-best at another SemEval task, on recognizing differences in attributes. In November 2018, the new state-of-the-art in the Story Cloze Test was set by Jiaao Chen et al. using ConceptNet, outperforming major systems such as GPT.

If you’re using ConceptNet in natural language processing, you should consider applying to the Common Sense In NLP workshop that I’m co-organizing at EMNLP 2019.

In a previous post, we mentioned the good results that systems built using ConceptNet got at SemEval this year. One of those systems was our own entry to the “Capturing Discriminative Attributes” task, about determining differences in meanings between words.

The system we submitted got second place, by combining information from ConceptNet, WordNet, Wikipedia, and Google Books. That system has some messy dependencies and fiddly details, so in this tutorial, we’re going to build a much simpler version of the system that also performs well.

Our poster, a prettier version of our SemEval paper, mainly presents the full version of the system, the one that uses five different methods of distinguishing attributes and combines them all in an SVM classifier. But here, I particularly want you to take note of the “ConceptNet is all you need” section, describing a simpler version we discovered while evaluating what made the full system work.

It seems that, instead of using five kinds of features, we may have been able to do just as well using just the pre-trained embeddings we call ConceptNet Numberbatch. So we’ll build that system here, using the ConceptNet Numberbatch data and a small amount of code, with only common dependencies (pandas and sklearn).

from sklearn.metrics import f1_score
import numpy as np
import pandas as pd

I want you to be able to reproduce this result, so I’ve put the SemEval data files, along with the exact version of ConceptNet Numberbatch we were using, in a zip file on my favorite scientific data hosting service, Zenodo.

These shell commands should serve the purpose of downloading and extracting that data, if the wget and unzip commands are available on your system.

!wget https://zenodo.org/record/1289942/files/conceptnet-distinguishing-attributes-data.zip
!unzip conceptnet-distinguishing-attributes-data.zip

In our actual solution, we imported some utilities from the ConceptNet5 codebase. In this simplified version, we’ll re-define the utilities that we need.

def text_to_uri(text):
    """
    An extremely cut-down version of ConceptNet's `standardized_concept_uri`.
    Converts a term such as "apple" into its ConceptNet URI, "/c/en/apple".

    Only works for single English words, with no punctuation besides hyphens.
    """
    return '/c/en/' + text.lower().replace('-', '_')


def normalize_vec(vec):
    """
    Normalize a vector to a unit vector, so that dot products are cosine
    similarities.

    If it's the zero vector, leave it as is, so all its cosine similarities
    will be zero.
    """
    norm = vec.dot(vec) ** 0.5
    if norm == 0:
        return vec
    return vec / norm

We would need a lot more support from the ConceptNet code if we wanted to apply ConceptNet’s strategy for out-of-vocabulary words. Fortunately, the words in this task are quite common. Our out-of-vocabulary strategy can be to return the zero vector.

class AttributeHeuristic:
    def __init__(self, hdf5_filename):
        """
        Load a word embedding matrix that is the 'mat' member of an HDF5 file,
        with UTF-8 labels for its rows.

        (This is the format that ConceptNet Numberbatch word embeddings use.)
        """
        self.embeddings = pd.read_hdf(hdf5_filename, 'mat', encoding='utf-8')
        self.cache = {}

    def get_vector(self, term):
        """
        Look up the vector for a term, returning it normalized to a unit vector.
        If the term is out-of-vocabulary, return a zero vector.

        Because many terms appear repeatedly in the data, cache the result.
        """
        uri = text_to_uri(term)
        if uri in self.cache:
            return self.cache[uri]
        else:
            try:
                vec = normalize_vec(self.embeddings.loc[uri])
            except KeyError:
                # Build the zero vector directly as floats, so the result
                # doesn't depend on pandas' default dtype for empty data.
                vec = pd.Series(0.0, index=self.embeddings.columns)
            self.cache[uri] = vec
            return vec

    def get_similarity(self, term1, term2):
        """
        Get the cosine similarity between the embeddings of two terms.
        """
        return self.get_vector(term1).dot(self.get_vector(term2))

    def compare_attributes(self, term1, term2, attribute):
        """
        Our heuristic for whether an attribute applies more to term1 than
        to term2: find the cosine similarity of each term with the
        attribute, and take the difference of the square roots of those
        similarities.
        """
        match1 = max(0, self.get_similarity(term1, attribute)) ** 0.5
        match2 = max(0, self.get_similarity(term2, attribute)) ** 0.5
        return match1 - match2

    def classify(self, term1, term2, attribute, threshold):
        """
        Convert the attribute heuristic into a yes-or-no decision, by testing
        whether the difference is larger than a given threshold.
        """
        return self.compare_attributes(term1, term2, attribute) > threshold

    def evaluate(self, semeval_filename, threshold):
        """
        Evaluate the heuristic on a file containing instances of this form:

            banjo,harmonica,stations,0
            mushroom,onions,stem,1

        Return the macro-averaged F1 score. (As in the task, we use
        macro-averaged F1 instead of raw accuracy, to avoid being misled by
        imbalanced classes.)
        """
        our_answers = []
        real_answers = []
        # Use a context manager so the file is closed when we're done.
        with open(semeval_filename, encoding='utf-8') as infile:
            for line in infile:
                term1, term2, attribute, strval = line.rstrip().split(',')
                discriminative = bool(int(strval))
                real_answers.append(discriminative)
                our_answers.append(self.classify(term1, term2, attribute, threshold))

        return f1_score(real_answers, our_answers, average='macro')

When we ran this solution, our latest set of word embeddings calculated from ConceptNet was ‘numberbatch-20180108-biased’. This name indicates that it was built on January 8, 2018, and acknowledges that we haven’t run it through the de-biasing process, which we consider important when deploying a machine learning system.

Here, we didn’t want to complicate things by adding the de-biasing step. But keep in mind that this heuristic would probably have some unfortunate trends if it were asked to distinguish attributes of people’s names, genders, or ethnicities.

heuristic = AttributeHeuristic('numberbatch-20180108-biased.h5')

The classifier has one parameter that can vary, which is the “threshold”: the minimum difference between the square roots of the cosine similarities that will count as a discriminative attribute. When we ran the training code for our full SemEval entry on this one feature, we got a classifier that’s equivalent to a threshold of 0.096. Let’s simplify that by rounding it off to 0.1.

heuristic.evaluate('discriminatt-train.txt', threshold=0.1)

When we were creating this code, we didn’t have access to the test set — this is pretty much the point of SemEval. We could compare results on the validation set, which is how we decided to use a combination of five features, where the feature you see here is only one of them. It’s also how we found that taking the square root of the cosine similarities was helpful.

When we’re just revisiting a simplified version of the classifier, there isn’t much that we need to do with the validation set, but let’s take a look at how it does anyway.

heuristic.evaluate('discriminatt-validation.txt', threshold=0.1)

But what’s really interesting about this simple heuristic is how it performs on the previously held-out test set.

heuristic.evaluate('discriminatt-test.txt', threshold=0.1)

It’s pretty remarkable to see a test score that’s so much higher than the training score! It should actually make you suspicious that this classifier is somehow tuned to the test data.

But that’s why it’s nice to have a result we can compare to that followed the SemEval process. Our actual SemEval entry got the same score, a macro-averaged F1 of 73.6%, and showed that we could attain that number without having any access to the test data.

Many entries to this task performed better on the test data than on the validation data. It seems that the test set is cleaner overall than the validation set, which in turn is cleaner than the training set. Simple classifiers that generalize well had the chance to do much better on the test set. Classifiers which had the ability to focus too much on the specific details of the training set, some of which are erroneous, performed worse.

But you could still question whether the simplified system that we came up with after the fact can actually be compared to the system we submitted, which leads me to a digression about “lucky systems” at the end of this post.

Let’s see how this heuristic does on some examples of these “discriminative attribute” questions.

When we look at heuristic.compare_attributes(a, b, c), we’re asking if a is more associated with c than b is. The heuristic returns a number. By our evaluation above, we consider the attribute to be discriminative if the number is greater than 0.1.
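To see the arithmetic without loading any embeddings, here is a standalone toy version of the same calculation. The 2-D vectors are made up so the numbers are easy to follow; real Numberbatch vectors have far more dimensions, and the helper names `cosine` and `compare` are only for this sketch.

```python
import math


def cosine(u, v):
    """Cosine similarity of two vectors given as lists of floats."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def compare(vec1, vec2, attribute_vec):
    """Difference of square roots of the clipped cosine similarities."""
    match1 = math.sqrt(max(0.0, cosine(vec1, attribute_vec)))
    match2 = math.sqrt(max(0.0, cosine(vec2, attribute_vec)))
    return match1 - match2


# Made-up 2-D vectors, chosen only to make the arithmetic easy to follow.
glass = [1.0, 0.0]
window = [0.8, 0.6]   # cosine with glass: 0.8
door = [0.0, 1.0]     # cosine with glass: 0.0

score = compare(window, door, glass)   # sqrt(0.8) - sqrt(0.0), about 0.894
```

With these toy vectors the score is well above the 0.1 threshold, and swapping the first two arguments flips its sign.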

Let’s start with an easy one: Most windows are made of glass, and most doors aren’t.

heuristic.compare_attributes('window', 'door', 'glass')

From the examples in the code above: mushrooms have stems, while onions don’t.

heuristic.compare_attributes('mushroom', 'onions', 'stem')

This one comes straight from the task description: cappuccino contains milk, while americano doesn’t. Unfortunately, our heuristic is not confident about the distinction, and returns a value less than 0.1. It would fail this example in the evaluation.

heuristic.compare_attributes('cappuccino', 'americano', 'milk')

An example of a non-discriminative attribute: trains and subways both involve rails. Our heuristic barely gets this right, but only due to lack of confidence.

heuristic.compare_attributes('train', 'subway', 'rails')

This was not required for the task, but the heuristic can also tell us when an attribute is discriminative in the opposite direction. Water is much more associated with soup than it is with fingers. It is a discriminative attribute that distinguishes soup from finger, not finger from soup. The heuristic gives us back a negative number indicating this.

heuristic.compare_attributes('finger', 'soup', 'water')

As a kid, I used to hold marble racing tournaments in my room, rolling marbles simultaneously down plastic towers of tracks and funnels. I went so far as to set up a bracket of 64 marbles to find the fastest marble. I kind of thought that running marble tournaments was peculiar to me and my childhood, but now I’ve found out that marble racing videos on YouTube are a big thing! Some of them even have overlays as if they’re major sporting events.

In the end, there’s nothing special about the fastest marble compared to most other marbles. It’s just lucky. If one ran the tournament again, the marble champion might lose in the first round. But the one thing you could conclude about the fastest marble is that it was no worse than the other marbles. A bad marble (say, a misshapen one, or a plastic bead) would never luck out enough to win.

In our paper, we tested 30 alternate versions of the classifier, including the one that was roughly equivalent to this very simple system. We were impressed by the fact that it performed as well as our real entry. And this could be because of the inherent power of ConceptNet Numberbatch, or it could be because it’s the lucky marble.

I tried it with other thresholds besides 0.1, and some of the nearby reasonable threshold values only score 71% or 72%. But that still tells you that this interestingly simple system is doing the right thing and is capable of getting a very good result. It’s good enough to be the lucky marble, so it’s good enough for this tutorial.
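A threshold sweep like that can be sketched in a few lines. This version uses only the standard library and made-up heuristic outputs, so the scores it produces say nothing about the real data; `macro_f1` and `sweep_thresholds` are names invented for the example.

```python
def macro_f1(real, predicted):
    """Macro-averaged F1 over the two classes True and False."""
    scores = []
    for label in (True, False):
        tp = sum(r == label and p == label for r, p in zip(real, predicted))
        fp = sum(r != label and p == label for r, p in zip(real, predicted))
        fn = sum(r == label and p != label for r, p in zip(real, predicted))
        denominator = 2 * tp + fp + fn
        scores.append(2 * tp / denominator if denominator else 0.0)
    return sum(scores) / len(scores)


def sweep_thresholds(differences, real, thresholds):
    """Score the rule `difference > threshold` at each candidate threshold."""
    return {
        threshold: macro_f1(real, [d > threshold for d in differences])
        for threshold in thresholds
    }


# Made-up heuristic outputs and gold labels, for illustration only.
differences = [0.30, 0.12, 0.08, 0.02, -0.20, 0.15]
real = [True, True, True, False, False, False]
results = sweep_thresholds(differences, real, [0.05, 0.1, 0.2])
```

With the real data, `differences` would come from `compare_attributes` on each line of a SemEval file.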

Incidentally, the same argument about “lucky systems” applies to SemEval entries themselves. There are dozens of entries from different teams, and the top-scoring entry is going to be an entry that did the right thing and also got lucky.

In the post-SemEval discussion at ACL, someone proposed that all results should be Bayesian probability distributions, estimated by evaluating systems on various subsets of the test data, and instead of declaring a single winner or a tie, we should get probabilistic beliefs as results: “There is an 80% chance that entry A is the best solution to the task, an 18% chance that entry B is the best solution…”
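One simple way to approximate that kind of result is bootstrap resampling of the test set. To be clear, this swaps in a bootstrap estimate for the Bayesian treatment that was proposed, it scores entries by number of correct answers rather than F1, and the data is invented.

```python
import random


def chance_of_being_best(correct_by_entry, trials=2000, seed=0):
    """
    Estimate how often each entry scores best on resampled test sets.

    `correct_by_entry` maps an entry name to a list of 0/1 values, one per
    test item, saying whether that entry answered the item correctly.
    Ties are split evenly between the tied entries.
    """
    rng = random.Random(seed)
    names = list(correct_by_entry)
    n_items = len(correct_by_entry[names[0]])
    wins = dict.fromkeys(names, 0.0)
    for _ in range(trials):
        # Resample test items with replacement (a bootstrap sample).
        sample = [rng.randrange(n_items) for _ in range(n_items)]
        totals = {name: sum(correct_by_entry[name][i] for i in sample)
                  for name in names}
        best = max(totals.values())
        leaders = [name for name in names if totals[name] == best]
        for name in leaders:
            wins[name] += 1.0 / len(leaders)
    return {name: wins[name] / trials for name in names}


# Made-up results for two entries on a 20-item test set.
results = {
    "A": [1] * 15 + [0] * 5,
    "B": [1] * 13 + [0] * 7,
}
chances = chance_of_being_best(results)
```

The output is a share of resampled test sets on which each entry came out on top, which is the "lucky marble" question asked directly.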

I find this argument entirely reasonable, and probably unlikely to catch on in a world where we haven’t even managed to replace the use of p-values.